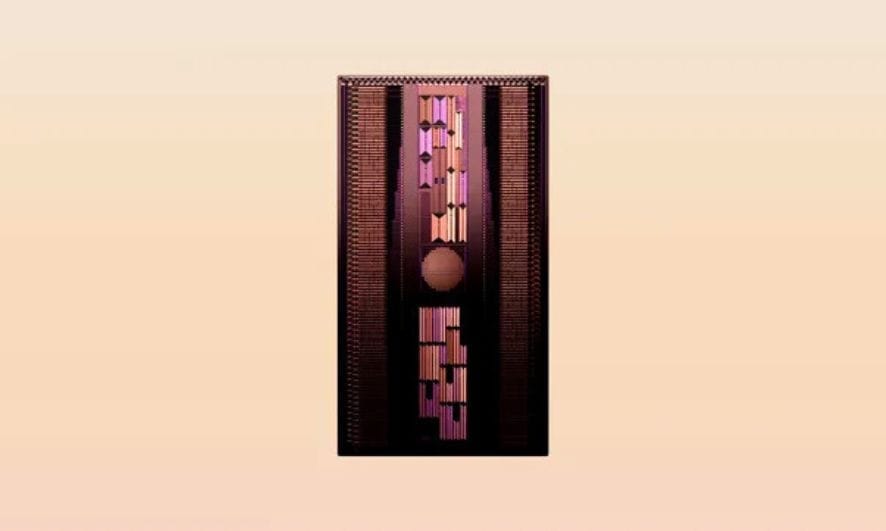

OpenAI working on new reasoning technology under code name ‘Strawberry’

OpenAI is working on a groundbreaking AI initiative called “Strawberry.” This ambitious project seeks to enhance the reasoning capabilities of AI models, pushing beyond their current limitations.

While details about Strawberry remain largely confidential, the project promises significant advances in how AI can autonomously navigate the internet for in-depth research.

Teams inside OpenAI are working on Strawberry, according to a copy of a recent internal OpenAI document seen by Reuters in May. Reuters could not ascertain the precise date of the document, which details a plan for how OpenAI intends to use Strawberry to perform research. The source described the plan to Reuters as a work in progress. The news agency could not establish how close Strawberry is to being publicly available.

How Strawberry works is a tightly kept secret even within OpenAI, the person said. The document describes a project that uses Strawberry models with the aim of enabling the company’s AI to not just generate answers to queries but to plan ahead enough to navigate the internet autonomously and reliably to perform what OpenAI terms “deep research,” according to the source.

This is something that has eluded AI models to date, according to interviews with more than a dozen AI researchers. Asked about Strawberry and the details reported in this story, an OpenAI company spokesperson said in a statement: “We want our AI models to see and understand the world more like we do. Continuous research into new AI capabilities is a common practice in the industry, with a shared belief that these systems will improve in reasoning over time.”

The spokesperson did not directly address questions about Strawberry. The Strawberry project was formerly known as Q*, which Reuters reported last year was already seen inside the company as a breakthrough.

Two sources described viewing earlier this year what OpenAI staffers told them were Q* demos, capable of answering tricky science and math questions out of reach of today’s commercially available models.

A different source briefed on the matter said OpenAI has tested AI internally that scored over 90% on a MATH dataset, a benchmark of championship math problems. Reuters could not determine if this was the “Strawberry” project.

On Tuesday at an internal all-hands meeting, OpenAI showed a demo of a research project that it claimed had new human-like reasoning skills, according to Bloomberg. An OpenAI spokesperson confirmed the meeting but declined to give details of the contents. Reuters could not determine if the project demonstrated was Strawberry.

OpenAI hopes the innovation will improve its AI models’ reasoning capabilities dramatically, the person familiar with it said, adding that Strawberry involves a specialized way of processing an AI model after it has been pre-trained on very large datasets.

Researchers Reuters interviewed say that reasoning is key to AI achieving human or super-human-level intelligence.

While large language models can already summarize dense texts and compose elegant prose far more quickly than any human, the technology often falls short on common sense problems whose solutions seem intuitive to people, like recognizing logical fallacies and playing tic-tac-toe. When the model encounters these kinds of problems, it often “hallucinates” bogus information.

AI researchers interviewed by Reuters generally agree that reasoning, in the context of AI, involves the formation of a model that enables AI to plan ahead, reflect how the physical world functions, and work through challenging multi-step problems reliably.

Improving reasoning in AI models is seen as the key to unlocking the ability for the models to do everything from making major scientific discoveries to planning and building new software applications.

OpenAI CEO Sam Altman said earlier this year that in AI “the most important areas of progress will be around reasoning ability.”

Other companies like Google, Meta and Microsoft are likewise experimenting with different techniques to improve reasoning in AI models, as are most academic labs that perform AI research. Researchers differ, however, on whether large language models (LLMs) are capable of incorporating ideas and long-term planning into how they do prediction. For instance, one of the pioneers of modern AI, Yann LeCun, who works at Meta, has frequently said that LLMs are not capable of humanlike reasoning.

According to The Reuters